The Moral Calculus of Effective Altruism

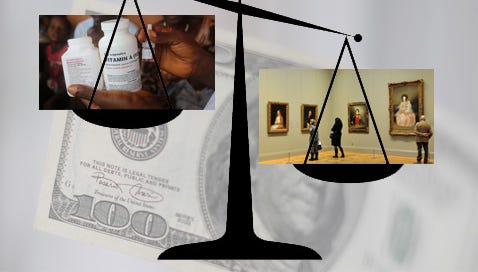

Consider the following decision problem you’re facing:

Case #1: You have a certain sum of money X that you’re considering allocating in one of the following two ways: (a) giving X to a well-known charity C fighting malaria in Sub-Saharan Africa; (b) giving X to an NGO N working with local governments to improve education conditions. If you choose (a), you have strong evidence that X will directly help to prevent 10 cases of malaria by funding bed nets. If you choose (b), you strongly believe that X will not be harmful but also that, at best, it will have a marginal impact that will benefit people only several years in the future, thanks to the improvement in education conditions.

Over the last decade, proponents of Effective Altruism (EA) have made a strong case for the claim that, in situations like Case #1, decisions about how to donate to alleviate poverty and, more generally, to improve living conditions should be determined exclusively by effectiveness considerations. Using empirical methods from the social sciences, especially economics (cost-effectiveness analysis, randomized controlled trials), effective altruists have created organizations that provide the information needed to allocate money to the most efficient charities, i.e., those that are the most effective at transforming money into lives saved. Unsurprisingly, EAs would recommend that you choose (a) in Case #1: your X dollars would almost surely save 10 lives, whereas under (b) they would only marginally contribute to improving education conditions, an improvement that might indirectly save lives in the future, but with a high degree of uncertainty.

Conditional on wanting to give money to improve the lives of the poorest people, it may seem reasonable to donate on the basis of an efficiency criterion. This has undesirable side effects, though. It may, for instance, unduly favor charities that work on problems easily amenable to cost-effectiveness measures or controlled experiments. It also reflects a very impartial and ultimately impersonal view of philanthropy and altruism, one that is blind to the importance of communitarian and other special relationships that are often at the root of the willingness to give. But a more significant problem, widely pointed out by critics, is that EA seems committed to treating the symptoms rather than the causes of poverty. Economists, in particular, are likely to point out that fighting poverty requires targeting the malfunctioning institutions that are responsible for the disastrous living conditions in parts of the world. Instead of allocating money to prevent or solve the problems created by these institutions, it would be better over the long run to improve or change the institutions themselves. Interestingly, this argument can be made using the same measures as those used by EAs, such as lives saved or QALYs. Consider the following variation of Case #1.

Case #2: Consider the following two possible worlds. In world W1, N persons each decide to give X to the charity C. As a result, 10N lives are saved. In world W2, N persons each decide to give X to the NGO N. The combined effect is a significant improvement of education institutions, with an estimated number of lives saved (or any equivalent measure) over the following 20 years that is well above 10N.

Recently, some EAs have admitted that in situations like Case #2, it might be permissible or even obligatory to allocate money to organizations working at the political level to improve institutions. After all, by the evaluative standards of EA, there are strong reasons to consider W2 better than W1. Some EAs may of course resist this assessment. First, it may be argued that, as an individual, you cannot choose the global allocation of resources. Giving to the NGO N in Case #2 is cost-effective only if a sufficient proportion of the N-1 other persons do the same. This is a classic collective action problem that may indeed prevent the best outcome from happening. There is a threshold N* such that it is rational for you to give to the NGO only if you expect at least N* persons to give to it. Second, the uncertainty is greater in W2 than in W1. Not only does giving to the NGO work only if others also give to it; we may also reasonably assume that, at the moment you’re making your decision, you’re uncertain about the combined effect of the donations on the improvement of institutions and, indirectly, on the number of lives saved. Risk-averse EAs may then prefer the (almost) certain W1. Finally, while the effects of donations to C are almost immediate, those of donations to the NGO are long-term. A preference for the present and the near future may then favor W1, though it should be noted that such a time preference conflicts with the impartial stance (both interpersonal and intertemporal) that EAs like to put forward as the only morally acceptable one.
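To make the threshold reasoning concrete, here is a minimal sketch of the individual donor's problem. It rests on assumptions that are not in the argument above: each of the other donors gives to the NGO independently with the same probability `q`, the NGO's work pays off only if the threshold is met, and a successful campaign is credited as a fixed number of lives per donor. All names and numbers are hypothetical.

```python
from math import comb


def prob_threshold_met(others: int, q: float, needed: int) -> float:
    """Probability that at least `needed` of `others` donors give to the NGO,
    assuming each gives independently with probability q (binomial tail)."""
    if needed <= 0:
        return 1.0
    return sum(
        comb(others, k) * q**k * (1 - q) ** (others - k)
        for k in range(needed, others + 1)
    )


def better_to_give_to_ngo(
    n_donors: int,
    q: float,
    threshold: int,
    lives_per_donor_if_success: float,
    lives_via_charity: float = 10.0,
) -> bool:
    """Compare expected lives saved per donation: NGO (uncertain) vs. charity (certain).

    Assumption: your own gift counts toward the threshold, so only
    threshold - 1 of the other n_donors - 1 persons need to give.
    """
    p_success = prob_threshold_met(n_donors - 1, q, threshold - 1)
    return p_success * lives_per_donor_if_success > lives_via_charity


# Hypothetical numbers: 100 donors, 60 needed, 30 lives per donor on success.
print(better_to_give_to_ngo(100, 0.9, 60, 30.0))  # others very likely to give
print(better_to_give_to_ngo(100, 0.3, 60, 30.0))  # others unlikely to give
```

Under these assumptions the same donor, with the same values, switches from the NGO to the charity purely because of what she expects others to do. A risk-averse donor would require even more than a higher expected value before choosing the NGO.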

Suppose however that despite these difficulties, EAs grant that W2 is better overall than W1. Consider Case #3:

Case #3: We consider two possible worlds W3 and W4. W3 is like W2, except that the magnitudes are 100 times bigger: 100N persons each decide to give X to the NGO N or similar organizations. There are diminishing marginal returns, but the estimated number of lives saved over 20 years is large, probably well above 1000N. In W4, the same amount of money (100NX) is instead allocated to organizations dedicated to preventing the realization of existential risks. The probability p that such a risk is realized over the next 100 years in case of inaction is very low, and the marginal effect of giving 100NX on p is very difficult to estimate. Still, we can be confident that it contributes to diminishing p. If an existential risk is realized, it will cost billions of lives, counting the future lives that will then never exist.

The Oxford philosopher Nick Bostrom has estimated that about 10^54 life-years could exist in the future if we master interstellar travel. Even if we do not, the number of future lives that would exist if we successfully prevented existential risks is still astronomical. Even after discounting this number for the high uncertainty about the marginal effect of spending money on the prevention of these risks, and for the uncertainty about the number of future lives that will exist, the expected number of lives saved appears far bigger in W4 than in W3.
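The comparison between W3 and W4 is a simple expected-value calculation, which the following sketch spells out. Every number in it is a hypothetical placeholder chosen for illustration: the number of donors, the number of future lives at stake, and the reduction in p bought by 100NX are all assumptions, not estimates from the literature.

```python
def expected_lives_saved(risk_reduction: float, lives_at_stake: float) -> float:
    """Expected lives saved = reduction in the probability of catastrophe
    multiplied by the number of lives lost if the catastrophe occurs."""
    return risk_reduction * lives_at_stake


# Hypothetical inputs (assumptions, for illustration only).
n_donors = 1_000_000           # the N of Case #2
lives_w3 = 1000 * n_donors     # W3: "well above 1000N", taken here at 1000N
lives_at_stake = 1e16          # assumed future lives, a deliberately modest figure
risk_reduction = 1e-6          # assumed reduction in p obtained with 100NX

lives_w4 = expected_lives_saved(risk_reduction, lives_at_stake)

# Smallest reduction in p for which W4 matches W3 in expected lives saved.
break_even = lives_w3 / lives_at_stake

print(lives_w4 > lives_w3)
print(break_even)
```

The point of the sketch is the break-even value: the larger the number of future lives at stake, the smaller the reduction in p that suffices for W4 to beat W3, which is why astronomical estimates of future lives dominate the calculus.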

The conclusion is thus the following. If we accept that the moral calculus of EA can be tweaked to account for the fact that, at least in a significant number of cases, it is permissible to allocate money to organizations that act at the political level to improve institutions, then it must be permissible, if not mandatory, to allocate this money to the prevention of existential risks. This is indeed the conclusion reached by some parts of the EA community. Is this conclusion morally acceptable? I guess that most people will answer negatively. Existential risks should not be underestimated, and research in psychology and behavioral economics shows that we are bad at estimating and accounting for low-probability events. But if EAs stick to their moral calculus, they should be led to the conclusion that virtually all resources should be allocated to the prevention of these risks. It is still possible to twist the calculus even further to avoid this conclusion. On the one hand, it could be argued that priority should be given to present lives and present suffering over future lives and future suffering. As in Case #2 above, however, this introduces a time preference that conflicts with the ethical commitments of most EAs. On the other hand, the conclusion could be circumvented by adopting what population ethicists call a “person-affecting view”. On this view, you cannot benefit or harm someone who does not exist. Failing to prevent existential risks would result in the non-existence of lives that would otherwise have existed. But, on this view, this failure would not harm these potential persons. The fact that they do not receive any benefits because an existential risk has been realized should not enter the moral calculus. However, beyond the fact that the “person-affecting view” leads to counterintuitive results in some cases, it now leads to the opposite conclusion: existential risks can be ignored altogether. This includes the potentially disastrous effects of climate change, which extend to future and not-yet-existing generations. Another conclusion that is hard to accept!